Trustworthy Machine Learning Lab

Artificial intelligence and machine learning models have become widely accessible thanks to significant advances in hardware and improved methods for training on large datasets. Their widespread use and deployment in real-life applications raises trustworthiness issues, because such systems make decisions that affect our lives.

As the Trustworthy Machine Learning Lab, we investigate a wide range of trustworthiness dimensions, such as bias, fairness, domain knowledge gain, explainability, privacy, safety, and robustness.

Lab Members (including past contributors)

- Ramazan Aygun, Director

- Mallika Boyapati, Ph.D. student

- Ayomide Afolabi, Ph.D. student

- Duleep Prasanna Rathgamage Don, Ph.D. student

- Neha Bhargava, MSCS (2022)

- Lokesh Meesala, MS student

- Lokesh Veeravalli, MSCS (2022)

- Sudhashree Sayenju, Ph.D. (2023)

- Jonathan Boardman, Ph.D. (2022)

Sample publications:

- S. Sayenju et al., "Quantifying Domain Knowledge in Large Language Models," 2023 IEEE Conference on Artificial Intelligence (CAI), Santa Clara, CA, USA, 2023, pp. 193-194, doi: 10.1109/CAI54212.2023.00091.

- Boyapati, Mallika, and Ramazan Aygun. "Explainable Machine Learning for Default Prediction on Commercial Credit Big Data Using Graph-based Variable Clustering." Encyclopedia with Semantic Computing and Robotic Intelligence (2023).

- S. Sayenju, R. Aygun, B. Franks, S. Johnston, G. Lee and G. Modgil, "Stereotype and Categorical Bias Evaluation via Differential Cosine Bias Measure," 2022 IEEE International Conference on Big Data (Big Data), Osaka, Japan, 2022, pp. 5082-5089, doi: 10.1109/BigData55660.2022.10020924.

- Sayenju, Sudhashree, Ramazan Aygun, Jonathan Boardman, Duleep Prasanna Rathgamage Don, Yifan Zhang, Bill Franks, Sereres Johnston, George Lee, Dan Sullivan, and Girish Modgil. "Quantification and Mitigation of Directional Pairwise Class Confusion Bias in a Chatbot Intent Classification Model." International Journal of Semantic Computing 16, no. 04 (2022): 497-520.

- N. Bhargava, Y. J. K. Kamgaing and R. Aygun, "ImpartialGAN: Fair and Unbiased Classification," 2022 IEEE 23rd International Conference on Information Reuse and Integration for Data Science (IRI), San Diego, CA, USA, 2022, pp. 152-157, doi: 10.1109/IRI54793.2022.00043.

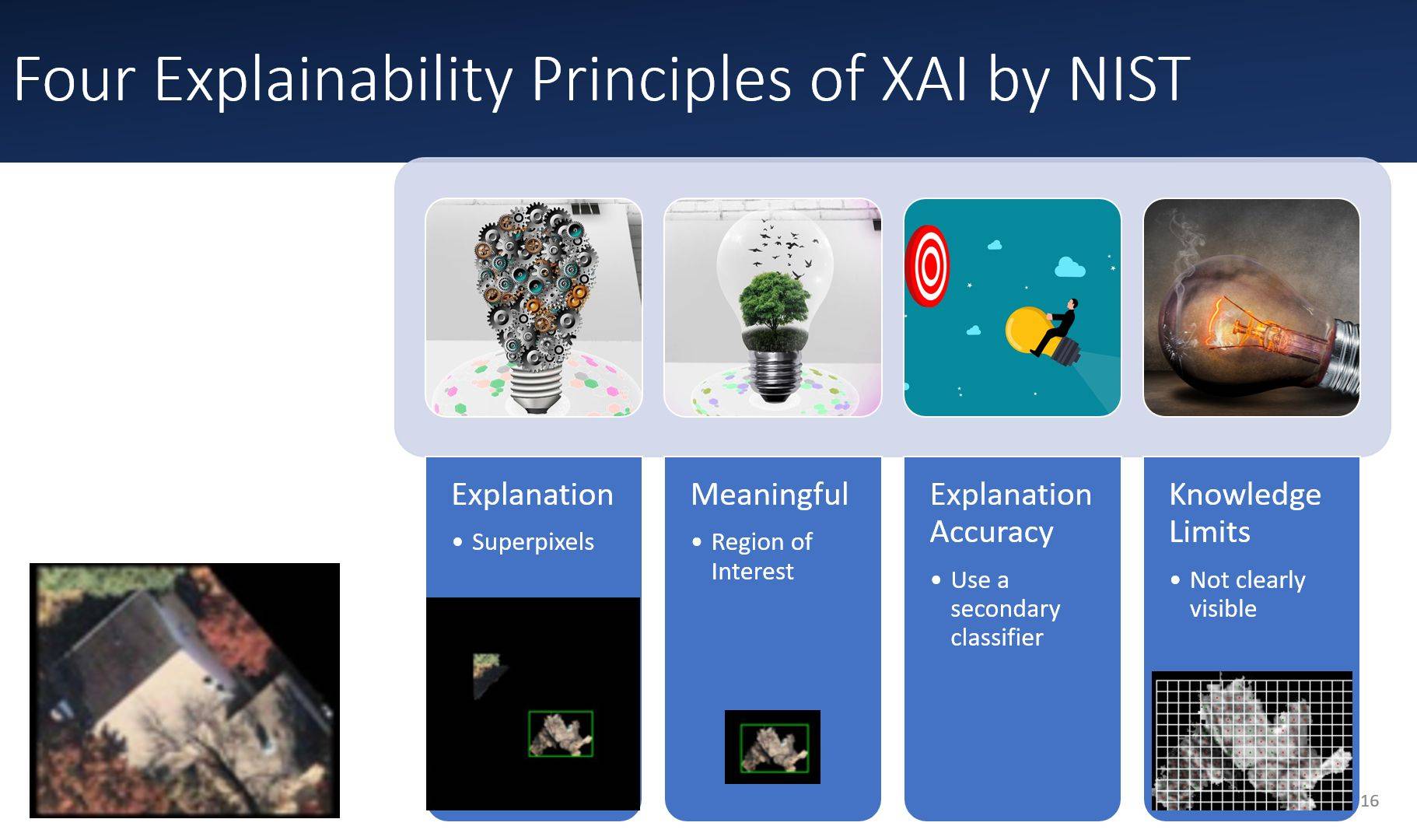

- D. R. Don et al., "Explainability Audit: An Automated Evaluation of Local Explainability in Rooftop Image Classification," 2022 IEEE 23rd International Conference on Information Reuse and Integration for Data Science (IRI), San Diego, CA, USA, 2022, pp. 184-189, doi: 10.1109/IRI54793.2022.00049.

- M. Karakaya, R. S. Aygun and A. B. Sallam, "Collaborative Deep Learning for Privacy Preserving Diabetic Retinopathy Detection," 2022 44th Annual International Conference of the IEEE Engineering in Medicine & Biology Society (EMBC), Glasgow, Scotland, United Kingdom, 2022, pp. 2181-2184, doi: 10.1109/EMBC48229.2022.9871617.

- S. Sayenju et al., "Directional Pairwise Class Confusion Bias and Its Mitigation," 2022 IEEE 16th International Conference on Semantic Computing (ICSC), Laguna Hills, CA, USA, 2022, pp. 67-74, doi: 10.1109/ICSC52841.2022.00017.

- Tran, T.X., Aygun, R.S. WisdomNet: trustable machine learning toward error-free classification. Neural Comput & Applic 33, 2719–2734 (2021). https://doi.org/10.1007/s00521-020-05147-4

- Mercan, S, Cebe, M, Aygun, RS, Akkaya, K, Toussaint, E, Danko, D. Blockchain-based video forensics and integrity verification framework for wireless Internet-of-Things devices. Security and Privacy. 2021; 4:e143. https://doi.org/10.1002/spy2.143